Automated AWS Incident Response — The next episode

Background

We’ve written quite a few blogs on AWS incident response [1],[2] and most recently on how you can use Sigma and Athena for incident detection and response. Today, we’re proud to announce a large update of Invictus-AWS, our open-source tool for AWS IR. With this tool we aim to further automate the acquisition and analysis of AWS log data relevant for investigations.

In this blog we’ll go over the new features and changes we’ve made. Keep on reading if you want to know how you can automatically acquire relevant data and perform automated analysis of CloudTrail logging. Before we dive into this, a big shout-out to Invictus’ newest addition, the one and only Benjamin, who’s responsible for these awesome new features.

Invictus-AWS

As you know, we are a cloud incident response company. In this business, we need tools to help us to get an overview of the cloud infrastructure we’re investigating and what is available to us. This is why we developed our own tool called Invictus-AWS for incident response on AWS. It’s been around for quite some time and we think it has huge potential.

We are happy to announce to you that, in the last few months, we’ve made some enhancements to the tool, so it can better answer the needs of people doing an investigation on AWS.

As mentioned, the tool was initially built to support investigations on AWS. Its main features were:

- Enumeration of most of the services running in an AWS environment.

- Collection of configuration details about all of the services returned by the above feature.

- Collection of relevant logs useful for further analysis.

Let’s discuss the most important changes we’ve made…

CloudTrail logs analysis

The main feature of this new version is the ability to analyze CloudTrail logs in Athena with a set of predefined queries. This feature takes as input an S3 bucket folder containing all the CloudTrail logs, runs the Athena queries, and outputs the data to the terminal and to a file.

The idea is that if you have a set of detection rules in Athena SQL format, our tool will run the queries against the CloudTrail logs and quickly tell you where interesting events are.

Approximately 25 queries in queries.yaml (link) are executed against the CloudTrail logs.
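
To make the idea concrete, here is a minimal sketch of what running a set of rules through Athena can look like. It is not the code used by Invictus-AWS: the function names `run_athena_query` and `find_hits`, and the plain dictionary of rule name to SQL, are assumptions made for illustration, and the real layout of queries.yaml may differ. The `client` argument is expected to behave like a boto3 Athena client.

```python
import time


def run_athena_query(client, sql, database, output_location, poll_seconds=1.0):
    """Run one query and return its rows as lists of strings (header row included)."""
    started = client.start_query_execution(
        QueryString=sql,
        QueryExecutionContext={"Database": database},
        ResultConfiguration={"OutputLocation": output_location},
    )
    query_id = started["QueryExecutionId"]

    # Athena is asynchronous: poll until the query reaches a terminal state.
    while True:
        status = client.get_query_execution(QueryExecutionId=query_id)
        state = status["QueryExecution"]["Status"]["State"]
        if state in ("SUCCEEDED", "FAILED", "CANCELLED"):
            break
        time.sleep(poll_seconds)

    if state != "SUCCEEDED":
        raise RuntimeError(f"query {query_id} ended in state {state}")

    # Only the first page of results is read here, to keep the sketch short.
    result = client.get_query_results(QueryExecutionId=query_id)
    return [
        [cell.get("VarCharValue", "") for cell in row["Data"]]
        for row in result["ResultSet"]["Rows"]
    ]


def find_hits(client, queries, database, output_location, poll_seconds=1.0):
    """Return {rule name: data rows} for every rule that matched at least one event."""
    hits = {}
    for name, sql in queries.items():
        rows = run_athena_query(client, sql, database, output_location, poll_seconds)
        data_rows = rows[1:]  # the first row returned by Athena holds the column names
        if data_rows:
            hits[name] = data_rows
    return hits
```

With boto3 installed, the client could be created with `boto3.client("athena", region_name="eu-west-3")` and passed in together with a dictionary of your own rules. The database name and the S3 output location are placeholders that depend on your environment.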

Some examples of this feature in action. To acquire all the available logs across all regions and analyze the results with the default queries:

$python3 invictus-aws.py -w -s -A

Warning: this can take some time in large environments.

The -w flag writes the output to S3 storage, the -s flag runs all steps (more on that later), and the -A flag runs the tool against all regions in the AWS account.

Run queries on an existing set of CloudTrail logs and write the results to a new location:

$python3 invictus-aws.py -r eu-west-3 -w -s 4 -o outputdirectory/

The -r flag is used to specify the region where the CloudTrail logs that we want to analyze are stored. With -s we specify that we only want to run the analysis step. With the -o flag we specify where the results of the analysis function are stored.

The GIF below shows that for several rules there are hits that need to be analyzed:

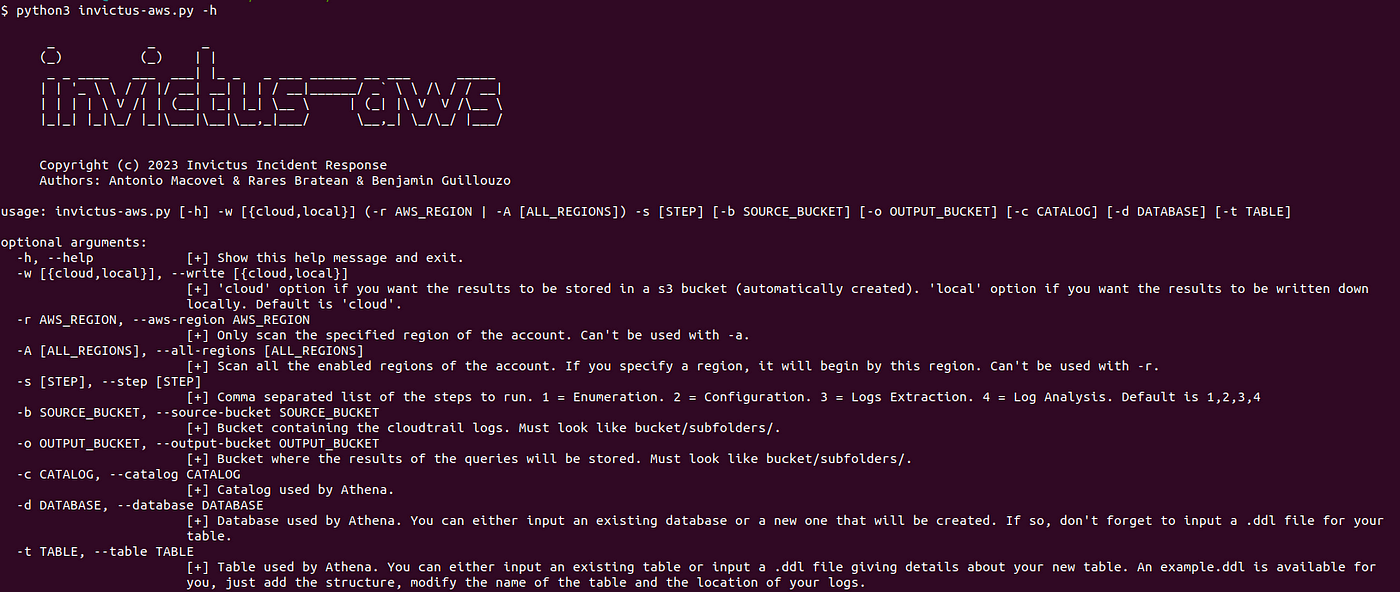

If you want to see all available options just run:

$python3 invictus-aws.py -h

Multi-Region acquisition and Storage choices

Let’s talk about the region choice first. In the previous version, you could run the tool against only one region at a time. Now you can run it against a single region that you specify, or against all the activated regions in the AWS account. With the latter option you can also specify a region, and the tool will start with that one before scanning all the others.

Run against eu-west-3 only:

$python3 invictus-aws.py -r eu-west-3

Run against all activated regions, beginning with eu-west-3:

$python3 invictus-aws.py -A eu-west-3
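
The "start with this region, then scan the rest" behaviour can be pictured with a small sketch. The helper name `order_regions` is hypothetical and this is not taken from the tool's source. In a real run the list of activated regions could come from the EC2 `describe_regions` API, while here it is simply passed in as a list.

```python
def order_regions(enabled_regions, first=None):
    """Return the regions to scan, with `first` moved to the front when given."""
    regions = sorted(set(enabled_regions))
    if first is None:
        return regions
    if first not in regions:
        raise ValueError(f"{first} is not enabled in this account")
    # Keep the chosen region first and the remaining regions in a stable order.
    return [first] + [region for region in regions if region != first]
```

For example, `order_regions(["us-east-1", "eu-west-3", "eu-west-1"], "eu-west-3")` puts eu-west-3 at the front and leaves the other two in alphabetical order.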

Now let’s talk about the storage location. The tool has always written its results to an automatically created S3 bucket in the account. In the new version, you can choose between writing the results to an S3 bucket or to your local storage.

If you choose to write the results to S3, you don’t need to specify it, since it’s the default:

$python3 invictus-aws.py -w

For local storage:

$python3 invictus-aws.py -w local

The local storage option creates a folder called results with the output of each step per region.

Execution logic

The tool now allows for individual steps of the tool to be executed. The tool has 4 main steps:

- Service enumeration

- Service configuration details collection

- Log acquisition

- Log analysis

In the previous version, it was all or nothing. From now on, you can choose to run only the steps you want, and they’re all independent. This is also useful in case one of the steps doesn’t work or crashes: the other steps will still work.
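
As a rough illustration of independent steps, the sketch below parses a selection such as `1,2` and runs each selected step in isolation, so that one failure does not stop the others. The names `STEP_NAMES`, `parse_steps` and `run_steps` are hypothetical and are not taken from the Invictus-AWS source. The step numbering follows the order of the list above.

```python
STEP_NAMES = {1: "enumeration", 2: "configuration", 3: "logs", 4: "analysis"}


def parse_steps(value=None):
    """Turn a selection such as "1,2" into [1, 2]; no value selects every step."""
    if not value:
        return sorted(STEP_NAMES)
    steps = sorted({int(part) for part in value.split(",")})
    unknown = [step for step in steps if step not in STEP_NAMES]
    if unknown:
        raise ValueError(f"unknown step(s): {unknown}")
    return steps


def run_steps(steps, handlers):
    """Run each selected step; a failing step is recorded and the rest still run."""
    outcome = {}
    for step in steps:
        try:
            handlers[step]()
            outcome[STEP_NAMES[step]] = "ok"
        except Exception as exc:  # deliberately broad: isolate failures per step
            outcome[STEP_NAMES[step]] = f"failed: {exc}"
    return outcome
```

Here `handlers` maps a step number to a function taking no arguments. The returned dictionary records, per step, whether it completed or why it failed.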

In this example, we run only the service enumeration and service configuration details in one region and store the results locally:

$python3 invictus-aws.py -r eu-west-3 -w local -s 1,2

Here is an example showing the above in action:

Other improvements

We have improved the error handling in the tool. Now if a bug appears (it won’t, but you never know, right?), the tool will keep running without crashing.

All calls to the AWS API have been improved: previously, each call returned only the first 100 results. For a small infrastructure that wouldn’t be a real problem, but we wanted the tool to be useful for everyone.
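
One common way to get past a first-page limit is to follow the continuation token that many AWS list and describe calls return. The sketch below shows that pattern in general terms; it is an assumption about the approach, not a copy of what the tool does. The helper name `collect_all` is hypothetical, and the token field name differs between services (`NextToken` is only one of several).

```python
def collect_all(fetch_page, items_key, token_key="NextToken"):
    """Call fetch_page repeatedly, following the continuation token, and merge the items."""
    items = []
    token = None
    while True:
        # Only pass the token once the service has handed one back.
        kwargs = {token_key: token} if token else {}
        page = fetch_page(**kwargs)
        items.extend(page.get(items_key, []))
        token = page.get(token_key)
        if not token:
            return items
```

boto3 also ships built-in paginators (`client.get_paginator(...)`) that take care of this loop for the operations that support them.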

Conclusion

With this update, we hope the tool will have a bigger impact and be more useful to you during your AWS incident response engagements. Do you have suggestions or do you want to understand how the tool works? Visit our GitHub page https://github.com/invictus-ir/Invictus-AWS

A lot of love, sweat and some tears went into the development of this free tool. If you want to give something back to us, please consider any of our commercial services such as a retainer or an assessment of your environment.

Be ready for the next cloud incident.